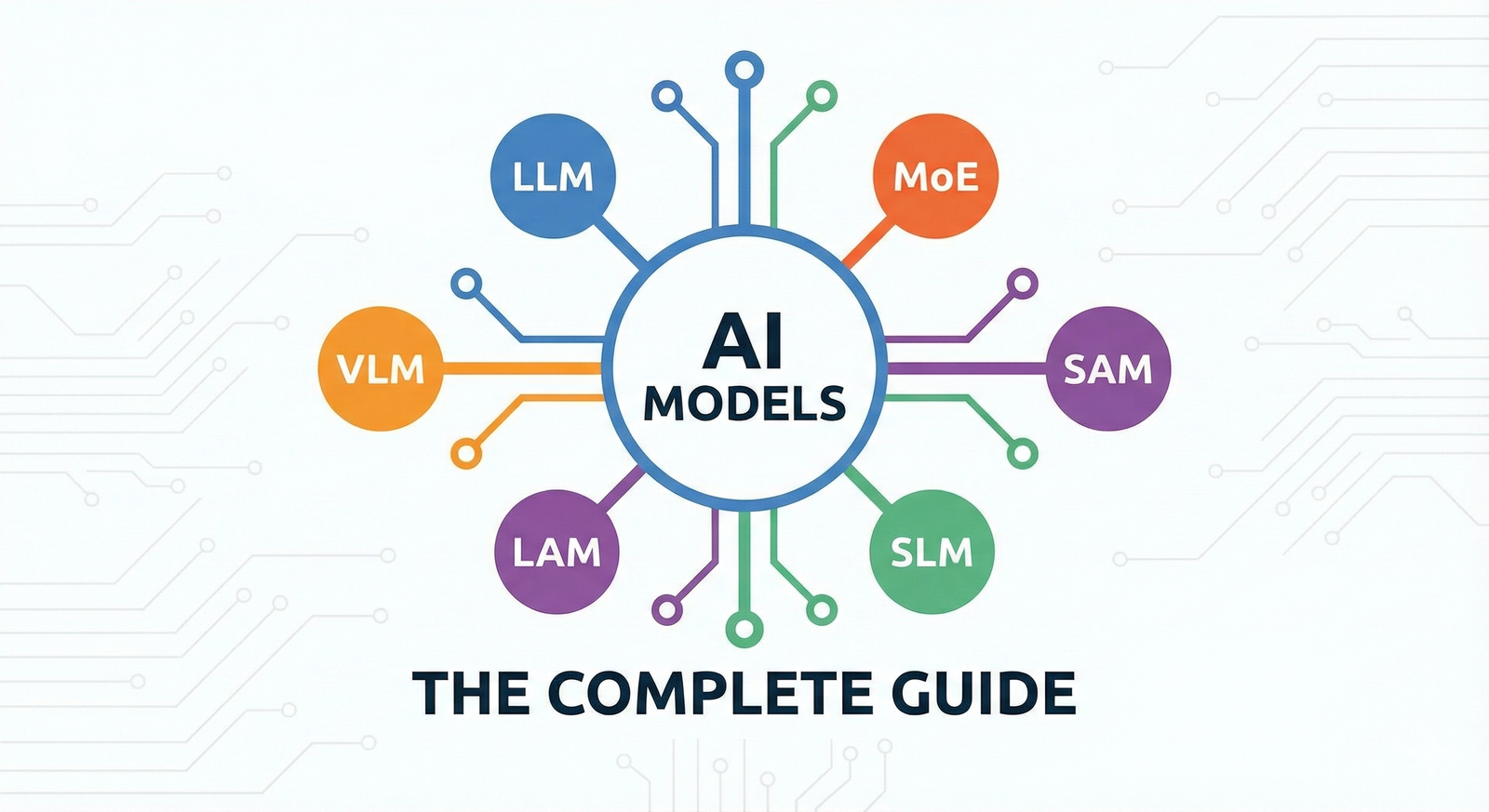

LLM, VLM, SAM, MoE: The Complete Guide to AI Model Types

The alphabet soup of AI explained simply. We break down the 8 distinct model architectures, from the "Digital Interns" (LAMs) to the "Pocket Encyclopedias" (SLMs), and when to use each.

Frank Koziarz

The AI landscape has exploded with acronyms. LLM, VLM, SAM, MoE—it's easy to get lost in the alphabet soup. But here's the reality: these aren't just marketing terms; they represent fundamentally different ways of thinking and solving problems.

The 8 Model Types at a Glance

Before we dive into the mechanics, here is your cheat sheet for what each model actually does.

| Acronym | Model Type | The Analogy |
|---|---|---|
| LLM | Large Language Model | The Autocomplete on Steroids |
| MoE | Mixture of Experts | The Team of Specialists |
| VLM | Vision Language Model | The AI with Eyes |
| LAM | Large Action Model | The Digital Intern |
| SAM | Segment Anything Model | The Digital Scissors |
| LCM | Large Concept Model | The Universal Translator |
| SLM | Small Language Model | The Pocket Encyclopedia |
| MLM | Masked Language Model | The "Fill-in-the-Blank" Solver |

1. LLM: The Foundation

Think of it as: Super-powered Autocomplete.

You probably use one every day (ChatGPT, Claude). An LLM is trained on a huge corpus of text, much of it from the internet, to do one simple thing: predict the next token (roughly, the next word or word fragment). Because it has seen so much text, it picks up patterns that look like logic, reasoning, and creativity, simply because they help it make better predictions.

Figure: How an LLM works. It predicts the most likely next token based on context.
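To make "predict the next token" concrete, here is a minimal sketch of a bigram model: it counts which word follows which in a tiny corpus, then always picks the most frequent follower. This is a toy stand-in, not how a real LLM is built (those use neural networks trained on vastly more text), and the function names and corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, prev):
    """Return the most frequent follower of `prev`, or None if unseen."""
    followers = counts.get(prev)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, max_new_tokens=5):
    """Repeatedly append the predicted next token, one step at a time."""
    out = [start]
    for _ in range(max_new_tokens):
        nxt = predict_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


# Illustrative corpus (an assumption, not real training data).
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

print(predict_next(model, "the"))
print(generate(model, "on", max_new_tokens=2))
```

The generation loop is the part that carries over to real systems: predict one token, append it, and predict again from the longer context.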

2. MoE: The Manager (Mixture of Experts)

Think of it as: A Hospital with different departments.

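Standard (dense) models are like a general practitioner trying to know everything. A Mixture of Experts (MoE) model is a hospital containing a cardiologist, a neurologist, and a pediatrician. A small "router" network decides which experts should handle each piece of the input. In practice this happens token by token inside the model, not once per question, and the experts rarely map onto neat human specialties. Because only a few experts run for each token, the model can hold far more parameters in total without paying the compute cost of using its entire brain every time.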

Figure: How MoE routes queries. Only the relevant experts are activated, which allows massive scale with efficient compute.
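The routing idea can be sketched in a few lines. The example below assumes a common top-k scheme: turn the router's scores into probabilities, keep the k highest-scoring experts, renormalize their weights, and blend only those experts' outputs. The experts here are trivial functions and the router scores are passed in by hand; in a real model both are learned networks, so treat every name and number as hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k=2):
    """Pick the k experts with the highest score and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_layer(x, experts, router_scores, k=2):
    """Run only the selected experts and return their weighted sum."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Four toy "experts" (assumptions for illustration only).
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]

# Hypothetical router scores for one token: experts 1 and 2 score highest.
scores = [0.0, 2.0, 1.0, -1.0]

print(route_top_k(scores, k=2))
print(moe_layer(3.0, experts, scores, k=2))
```

With k=2 out of four experts, half of the expert functions are never called for this token, which is where the compute savings come from.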

3. VLM: The Eye (Vision Language Model)

Think of it as: Giving ChatGPT a pair of glasses.

Text-only LLMs are blind: they only know text. A VLM adds a visual encoder (like a digital retina) that converts pixels into numerical embeddings the language model can process alongside words. This allows the model to "reason" about what it sees, letting you show it a picture of your fridge ingredients and ask for a recipe.

Figure: VLM dual-encoder architecture.
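One way to picture the visual side is as a pipeline: cut the image into patches, project each patch into a vector of the same width as the text embeddings, then hand the language model one combined sequence. The sketch below does this with plain lists. The projection weights and text embeddings are hypothetical hand-picked values, where a real VLM would learn them.

```python
def image_to_patches(image, patch):
    """Split a 2D grid of pixel values into flattened patch-by-patch tiles.

    Assumes the image height and width are multiples of `patch`.
    """
    height, width = len(image), len(image[0])
    return [
        [image[r + i][c + j] for i in range(patch) for j in range(patch)]
        for r in range(0, height, patch)
        for c in range(0, width, patch)
    ]


def project(vector, weights):
    """Linear projection: one output value per row of `weights`."""
    return [sum(v * w for v, w in zip(vector, row)) for row in weights]


# A 4x4 grayscale "image" made of four flat 2x2 regions.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [2, 2, 3, 3],
    [2, 2, 3, 3],
]

# Hypothetical projection weights (a trained model would learn these).
# Row 0 averages the patch; row 1 copies its top-left pixel.
weights = [[0.25, 0.25, 0.25, 0.25], [1, 0, 0, 0]]

visual_tokens = [project(p, weights) for p in image_to_patches(image, 2)]

# Hypothetical text embeddings with the same width as the visual tokens.
text_tokens = [[0.1, 0.2], [0.3, 0.4]]

# The language model reads image and text as one sequence of vectors.
sequence = visual_tokens + text_tokens
print(len(sequence))
```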

4. LAM: The Doer (Large Action Model)

Think of it as: A Digital Intern using your mouse and keyboard.

On its own, an LLM can write an email but can't send it. It is trapped in a text box. A Large Action Model (LAM) is trained to understand user interfaces: buttons, search bars, and menus. Instead of replying with text, it replies with actions: "Click 'Buy Now'", "Type 'Pizza' in search", or "Scroll Down".

Figure: LAM, from intent to action. It outputs executable actions, not just text.
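"Actions instead of text" usually means structured output that a program can execute. Here is a minimal sketch, assuming a tiny action vocabulary (click, type, scroll) and a fake UI that just records what it was asked to do. The `Action` type, `FakeUI` class, and the plan itself are hypothetical; a real LAM would produce the plan from a user request and drive an actual browser or app.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str       # "click", "type", or "scroll"
    target: str     # label of the UI element to act on
    text: str = ""  # only used by "type" actions


class FakeUI:
    """Stand-in for a real interface; it only logs what it receives."""

    def __init__(self):
        self.log = []

    def click(self, target):
        self.log.append(f"click:{target}")

    def type_text(self, target, text):
        self.log.append(f"type:{target}={text}")

    def scroll(self, target):
        self.log.append(f"scroll:{target}")


def execute(actions, ui):
    """Dispatch each structured action to the matching UI call."""
    for action in actions:
        if action.kind == "click":
            ui.click(action.target)
        elif action.kind == "type":
            ui.type_text(action.target, action.text)
        elif action.kind == "scroll":
            ui.scroll(action.target)
        else:
            raise ValueError(f"unknown action kind: {action.kind}")


# What a model might emit for "order me a pizza" (hand-written here).
plan = [
    Action("type", "search", "Pizza"),
    Action("scroll", "down"),
    Action("click", "Buy Now"),
]

ui = FakeUI()
execute(plan, ui)
print(ui.log)
```

Keeping the action set small and explicit also makes it easy to reject anything the model emits that falls outside it.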

5. SAM: The Editor (Segment Anything Model)

Think of it as: Magic Scissors.

This isn't a chatbot. It's a visual tool. You show SAM a picture of a crowded street and click on a single person. SAM returns a mask that outlines that person, so you can cut them out from the background, usually with very little cleanup. Segmentation models like it sit behind the one-click selection features in many modern photo editing tools.

Figure: SAM segmentation flow.
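The input and output of this flow are simple to state: an image plus a click goes in, a mask of the same size comes out. The sketch below shows that contract using a plain flood fill, which selects connected pixels that share the clicked pixel's value. To be clear, flood fill is a swapped-in classical technique, not what SAM does internally (SAM uses learned neural networks and handles real photos); only the shape of the interface is the point here.

```python
from collections import deque


def segment_from_click(image, click):
    """Return a boolean mask of the region connected to the clicked pixel.

    `image` is a 2D list of pixel values and `click` is a (row, col) pair.
    """
    height, width = len(image), len(image[0])
    row0, col0 = click
    target = image[row0][col0]

    mask = [[False] * width for _ in range(height)]
    mask[row0][col0] = True
    queue = deque([(row0, col0)])

    while queue:
        r, c = queue.popleft()
        # Visit the four direct neighbours (up, down, left, right).
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < height and 0 <= nc < width
            if inside and not mask[nr][nc] and image[nr][nc] == target:
                mask[nr][nc] = True
                queue.append((nr, nc))
    return mask


# Toy image: the 1s form an "object" on a background of 0s.
image = [
    [0, 0, 1],
    [0, 1, 1],
    [0, 0, 0],
]

mask = segment_from_click(image, (0, 2))
for row in mask:
    print(row)
```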

6. LCM: The Translator (Large Concept Model)

Think of it as: The Babel Fish.

Language is messy. LCMs don't work word by word. They convert each sentence into a "concept": a mathematical representation (a vector) of the idea itself that is largely independent of the language it was written in. Once the idea is captured, it can in principle be expressed in French, Japanese, or any other language the decoder supports.

Figure: LCM, a language-agnostic concept space. The same concept vector decodes to any language.
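A shared concept space can be illustrated with a toy lookup: sentences from different languages that mean the same thing get nearly the same vector, so "decoding" into a target language amounts to finding the closest sentence in that language. The vectors below are hand-made assumptions, not output from any real encoder, and a real system would generate text instead of searching a fixed list.

```python
import math

# Hypothetical, hand-made concept vectors keyed by (language, sentence).
CONCEPTS = {
    ("en", "the cat sleeps"): (0.90, 0.10, 0.00),
    ("fr", "le chat dort"): (0.88, 0.12, 0.02),
    ("en", "it is raining"): (0.00, 0.20, 0.95),
    ("fr", "il pleut"): (0.03, 0.18, 0.90),
}


def cosine(a, b):
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def encode(lang, sentence):
    """Map a known sentence to its concept vector."""
    return CONCEPTS[(lang, sentence)]


def decode(vector, target_lang):
    """Return the sentence in `target_lang` whose vector is closest."""
    candidates = [
        (sentence, vec)
        for (lang, sentence), vec in CONCEPTS.items()
        if lang == target_lang
    ]
    best_sentence, _ = max(candidates, key=lambda item: cosine(vector, item[1]))
    return best_sentence


concept = encode("en", "the cat sleeps")
print(decode(concept, "fr"))
```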

7. SLM: The Efficient One (Small Language Model)

Think of it as: A curated textbook vs. the entire internet.

LLMs are huge and typically run on server farms. SLMs are much smaller, with far fewer parameters. How do they stay useful? Part of the answer is data: instead of training on the messy, unfiltered internet, researchers train some SLMs on highly curated, textbook-quality data. The result is a capable model that can fit on your phone, which helps privacy because your data doesn't have to leave the device.

Figure: SLM, on-device AI.
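Whether a model fits on a phone is mostly arithmetic: the memory needed for the weights is roughly the number of parameters times the bytes used to store each one. The sketch below works through two cases. The parameter counts and bit widths are illustrative assumptions, not figures for any specific model, and the estimate ignores the extra memory needed at run time.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for model weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Hypothetical small model: 3 billion parameters stored at 4 bits each.
small = weight_memory_gb(3e9, 4)

# Hypothetical large model: 70 billion parameters stored at 16 bits each.
large = weight_memory_gb(70e9, 16)

print(f"small model: {small:.1f} GB")
print(f"large model: {large:.1f} GB")
```

Under these assumptions the small model needs about 1.5 GB for its weights and the large one about 140 GB, which is the gap between a phone and a rack of server hardware.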

8. MLM: The Analyst (Masked Language Model)

Think of it as: Solving a crossword puzzle.

Generative models (like GPT) write forward, one token at a time. MLMs (like BERT) look at the whole sentence at once. During training, random words in the sentence are covered up (masked) and the model has to guess them from the context on both sides. This makes them well suited to understanding tasks such as sentiment analysis, classification, and search, where the goal is to interpret a document, not to write one.

Figure: MLM, bidirectional context. It uses the full context to understand meaning, not to generate text.
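The value of looking in both directions shows up even in a toy. The sketch below fills a `[MASK]` by counting which words appeared between a given left and right neighbour in a small corpus. It is a counting stand-in, not BERT (which is a neural network that attends to the whole sentence), and the corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict


def train_masked(sentences):
    """For each (left word, right word) pair, count the words seen between them."""
    table = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i in range(1, len(tokens) - 1):
            table[(tokens[i - 1], tokens[i + 1])][tokens[i]] += 1
    return table


def fill_mask(table, tokens):
    """Guess the [MASK] token from the words on both sides of it."""
    i = tokens.index("[MASK]")
    if i == 0 or i == len(tokens) - 1:
        return None  # this toy needs a neighbour on each side
    candidates = table.get((tokens[i - 1], tokens[i + 1]))
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Illustrative corpus: "great" and "terrible" are equally common after "was".
corpus = [
    "the movie was great fun",
    "the food was great fun",
    "the movie was terrible overall",
    "the service was terrible overall",
]
table = train_masked(corpus)

print(fill_mask(table, "the show was [MASK] fun".split()))
print(fill_mask(table, "the show was [MASK] overall".split()))
```

A model that only reads left to right sees "the show was" in both cases and has no basis for choosing. The word after the mask is what settles it.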

The takeaway? Modern AI isn't a single monolith. It's a toolbox. The most advanced systems are often hybrids: a multimodal model such as GPT-4o pairs the reasoning of an LLM with the vision of a VLM, and many large models add the routing efficiency of an MoE, although vendors do not always publish those architectural details.

Frank Koziarz

AI analyst and tech journalist covering the latest in artificial intelligence.